This post is also available in Dutch.

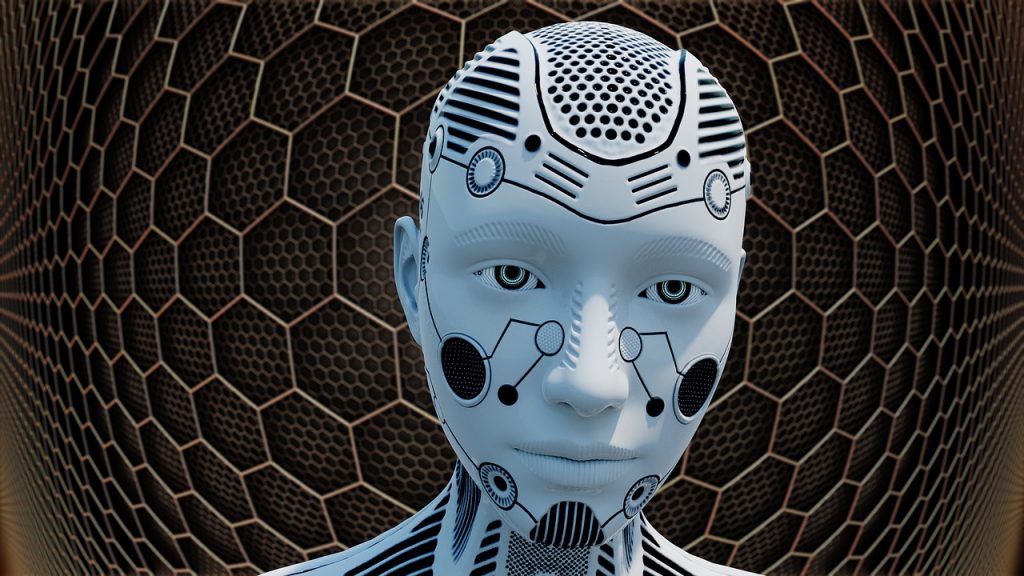

Machines are becoming smarter day by day, to the point that one day you may have a robot as a co-worker. How will we manage such a change?

Smart machines are increasingly present in our lives. We rely on the devices in our pockets for almost anything, from checking the weather forecast to setting up a date. They are also becoming smarter. So, it’s not hard to imagine that smart machines like robots might soon become co-workers with whom we will have to work shoulder to shoulder. But how would you work in a team with a robot co-worker that is not very collaborative and is trash-talking you? Would you take it less seriously, given that it’s a robot and not a human?

Apparently, we are inclined to take feedback from robots seriously. What a robot says matters so much that people perform worse in a game when they are trash-talked by a robot. In a recent study, participants played a strategy game called “Guards and Treasures” against Pepper, a commercially available humanoid robot. Whereas some participants were praised by Pepper, others received negative remarks, such as “I have to say, you are a terrible player”. Over the 35 rounds of the game, all players improved their performance, but those who were criticized by Pepper improved less. From other research we know that rude behavior can impair an individual’s performance on a task by, for example, causing psychological distress. So, these findings suggest that Pepper’s negative feedback may have affected some players emotionally.

The researchers who conducted the study stressed its novelty: rather than exploring how humans and computers could collaborate, the study focused on a scenario where things might go astray. While it is true that the context of this study was somewhat different from that of many studies in human-computer interaction, the findings point to the same conclusion as countless other studies. Participants reacted to the robot’s insults the same way they would have if a real person had uttered them. Why does this happen?

We take interactions with robots seriously because we assign intentions and motives, two important aspects of humanness, to anything that seems somewhat human-like. Pepper could hardly be confused with an actual human, but it has two eyes and a mouth, and it can talk. This is pretty much enough for us to start treating it like a human. Our tendency to attribute humanness to others has an upside: we come to trust entities that look similar to us. This is important if we consider that, in the not-so-distant future, smart machines might be performing the duties of a teacher or a caregiver for the elderly. So, there is a good chance that humans would trust robots and comply with their requests.

Training a robot to be polite is an achievable goal. But there are situations in which humans, too, can misbehave. Humans are masters of trash-talking and trolling, especially online. A case in point occurred in 2016, when Microsoft introduced a chatbot named Tay, which was given a Twitter account and was supposed to learn from interactions with humans. The bot was taken down less than 24 hours after its release, once it had become racist and offensive.

These examples of both robots and humans showing disrespect, though somewhat extreme, suggest that learning to co-exist with non-biological intelligence is not going to be easy. Some rules of conduct will need to be established. Will smart machines and people stick to them?

Original language: English

Credits

Author: Julija Vaitonyte

Buddy: João Guimarães

Editor: Rebecca Calcott

Translator: Felix Klaassen

Editor Translation: Wessel Hieselaar

Featured image by Pete Linforth via Pixabay (license)