This post is also available in Dutch.

Human vision is among neuroscientists’ favorite topics of study. It seems so simple: put a participant in a brain scanner, show them an image and observe which brain regions are activated. However, this approach is slowly becoming outdated. Time for a new technique: decoding.

Illustration by Pim Mostert.


Brain image by Bertyhell (CC BY-SA 3.0). Head image by Mouagip (public domain).

Measuring brain activity

The arrival of functional brain scanners in the 1990s gave us a unique view of how the brain works. Brain scanners allow us to measure which brain regions are active and when, making them an ideal tool for studying what the brain does while someone observes their surroundings. The idea is simple: we change the visual input, for example by presenting images of various objects, and simultaneously measure which brain regions become active.

Blobology

We’ve learned a lot using this approach. For example, we know there are specific brain regions that respond strongly to images of faces, whereas other areas respond specifically to houses or cars. Elementary patterns, such as geometrical shapes or simple lines, also have their own brain regions. Taken together, this research has led to a kind of ‘functional map’ of the brain, describing which areas are involved in different tasks.

Although these are valuable insights, they also raise questions. Do we really know how the brain processes the signals that come from the eyes? We now know where in the brain visual information is processed, but not how this is done. Research with such a strong focus on the where-question is sometimes mockingly referred to as ‘blobology,’ after the common images of the brain showing colorful ‘blobs’ of activity.

Decoding brain activity

How can we learn more about the question of how the brain processes information? One possible way is a technique that has become increasingly popular among neuroscientists over the past few years: the ‘decoding’ of brain activity.

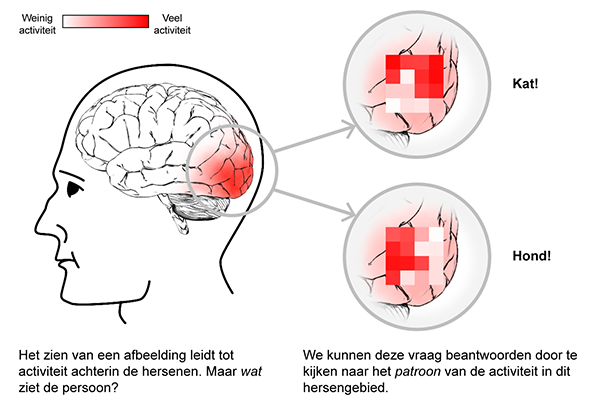

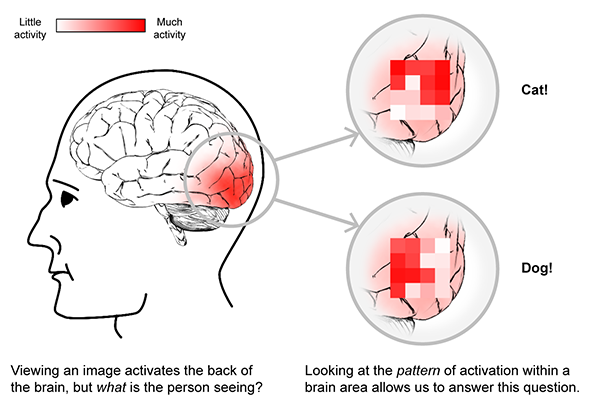

Here’s the idea. Suppose you present a participant with a picture of a dog, followed by a picture of a cat. Naturally, the person’s perception differs between the two; after all, dogs look different from cats. Therefore, we should also observe differences in brain activity between the two pictures. However, these differences may not show up in the location of the activation, because the same brain regions may be active for both dogs and cats. Instead, it turns out that the pattern of activity within a given brain region can vary according to the type of animal one sees. This means there is a pattern of activity associated with seeing cats and a different pattern that goes with seeing dogs, even though both activity patterns take place within the same brain region.
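To make the distinction between location and pattern concrete, here is a toy example. The numbers are made up: they stand for the activity of six hypothetical voxels (the small 3D measurement units of a brain scan) in a single region, and the helper names are invented for illustration. Both patterns have exactly the same average activity, so looking only at how strongly the region ‘lights up’ would not separate them, yet the patterns themselves clearly differ.

```python
# Hypothetical activity of six voxels in one brain region (arbitrary units).
# These values are invented for illustration, not measured data.
dog_pattern = [1.0, 3.0, 2.0, 0.5, 2.5, 1.0]
cat_pattern = [2.5, 1.0, 0.5, 3.0, 1.0, 2.0]


def mean_activation(pattern):
    """Average activity of the whole region: what a 'blob' analysis looks at."""
    return sum(pattern) / len(pattern)


def pattern_distance(a, b):
    """Euclidean distance between two voxel patterns: how different they look."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5


# Same overall activation for dogs and cats...
print(mean_activation(dog_pattern) == mean_activation(cat_pattern))  # True
# ...but clearly different patterns within the region.
print(round(pattern_distance(dog_pattern, cat_pattern), 2))  # 4.24
```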

Deciphering a pattern of brain activity is called decoding. Once you have found the right code, it’s possible to work backwards: when we observe pattern X in our brain scans, was the participant looking at an image of a dog or a cat? Thus, by decoding brain activity we can tell what a person is seeing!
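The ‘work backwards’ step can be sketched with one of the simplest possible decoders, a nearest-centroid classifier: during training, average the measured patterns for each category; afterwards, label a new pattern with the category whose average it most resembles. Everything here is an assumption for illustration: the six-voxel templates, the Gaussian noise level and the trial counts are invented, and the data are simulated rather than measured. Real decoding studies typically use more sophisticated classifiers, such as linear discriminant analysis or support vector machines, on much larger patterns.

```python
import random

# Hypothetical "true" activity patterns (six voxels, arbitrary units) that the
# simulated region produces for each image category. Invented for illustration.
TEMPLATES = {
    "dog": [1.0, 3.0, 2.0, 0.5, 2.5, 1.0],
    "cat": [2.5, 1.0, 0.5, 3.0, 1.0, 2.0],
}


def simulate_trial(template, rng, noise=0.5):
    """One simulated measurement: the template plus Gaussian measurement noise."""
    return [value + rng.gauss(0.0, noise) for value in template]


def centroid(trials):
    """Average pattern across trials, computed voxel by voxel."""
    return [sum(values) / len(trials) for values in zip(*trials)]


def distance(a, b):
    """Euclidean distance between two activity patterns."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5


def train_decoder(trials_by_label):
    """Learn one average pattern (the 'code') per image category."""
    return {label: centroid(trials) for label, trials in trials_by_label.items()}


def decode(decoder, pattern):
    """Work backwards: return the category whose learned pattern is closest."""
    return min(decoder, key=lambda label: distance(decoder[label], pattern))


rng = random.Random(42)
training = {
    label: [simulate_trial(template, rng) for _ in range(20)]
    for label, template in TEMPLATES.items()
}
decoder = train_decoder(training)

# Decode fresh, unseen trials and count how often the guess is right.
test_trials = [
    (label, simulate_trial(template, rng))
    for label, template in TEMPLATES.items()
    for _ in range(10)
]
correct = sum(decode(decoder, pattern) == label for label, pattern in test_trials)
accuracy = correct / len(test_trials)
print(f"decoding accuracy: {accuracy:.0%}")
```

The two hypothetical templates have the same average activity, so the decoder can only succeed by using how activity is distributed across the voxels, which is exactly the information a ‘blob’ analysis ignores. The trials used for testing are kept separate from the training trials, so a high accuracy reflects a learned code rather than memorized measurements.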

This blog post was written by Pim Mostert. Pim is a PhD student in the Prediction and Attention group at the Donders Institute. His research centers on visual perception and the role of predictions in perception. He’s into jazz and cats.